From Simulation to Real-World Deployment Using Digital Twins and AI

At ARENA2036, we are advancing the next generation of autonomous systems by combining artificial intelligence, robotics, and digital twin technologies. As part of the European AI MATTERS Initiative, a novel drone-based framework has been developed using RoboDK to enable intelligent aerial surveillance, inventory monitoring, and logistics automation.

This case study presents a simulation-driven development pipeline, where AI-powered drones are designed, trained, and validated in virtual environments before transitioning to real-world deployment.

Introducing ARENA2036 and the AI-MATTERS Initiative

ARENA2036 (Active Research Environment for the Next Generation of Automobiles) is a leading research institute in Europe that brings research groups together on a shared innovation platform. Located in the manufacturing hub of Stuttgart, it works at the forefront of advanced technologies and applied research.

One of its projects is the AI MATTERS Initiative, which works in collaboration with the European Union (EU) to transform European manufacturing industries through AI integration.

A Digital Twin Approach to Autonomous Drone Systems

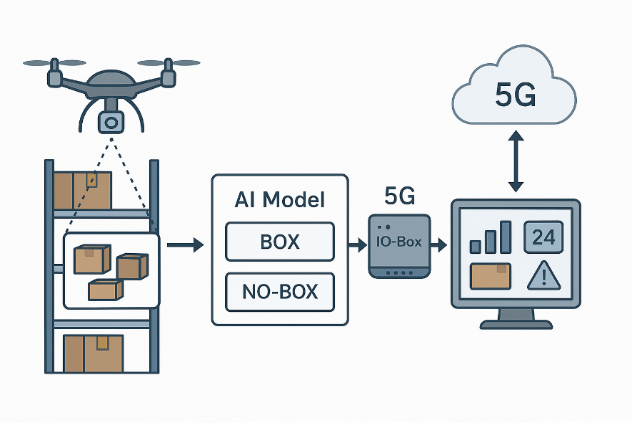

Traditional warehouse operations rely heavily on manual inspection and barcode scanning, limiting scalability and introducing inefficiencies. To overcome these challenges, ARENA2036 developed a digital twin–driven framework, where RoboDK serves as the central simulation environment for:

- Modeling warehouse and shopfloor environments

- Simulating drone navigation and perception

- Validating AI algorithms before deployment

This approach enables rapid iteration, reduces physical risk, and ensures reliable system behavior when transitioning from simulation to real-world environments.

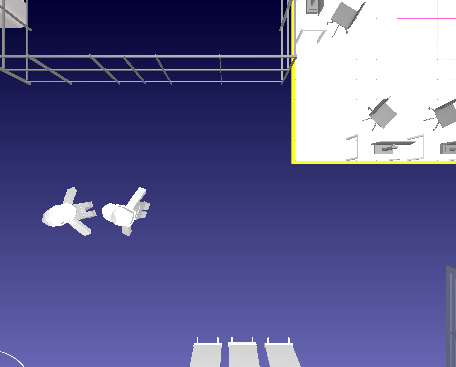

Figure 1: Concept Diagram of the AI-Driven Drone-Based Inventory Management System

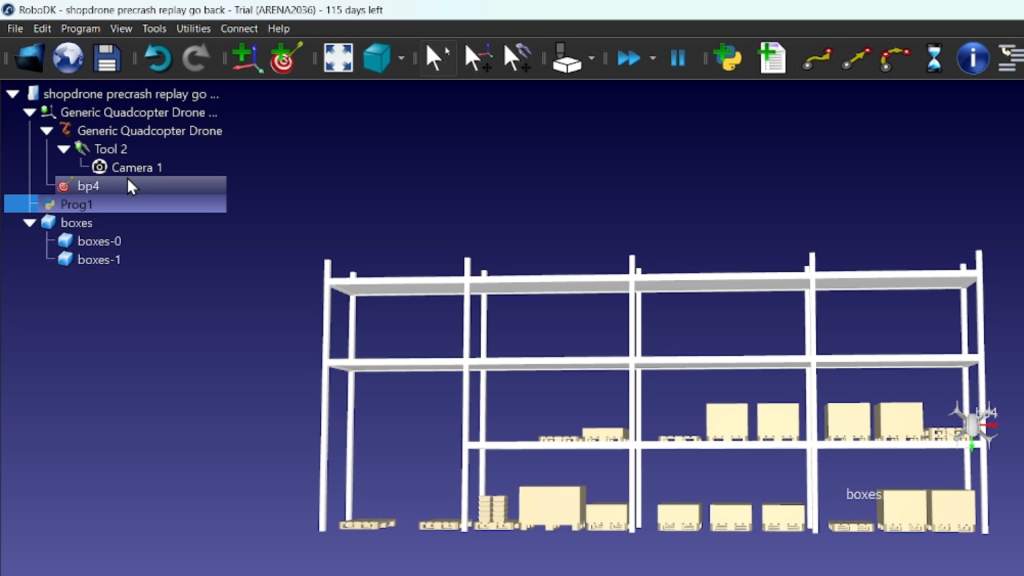

Digital Twin-Driven Development with RoboDK

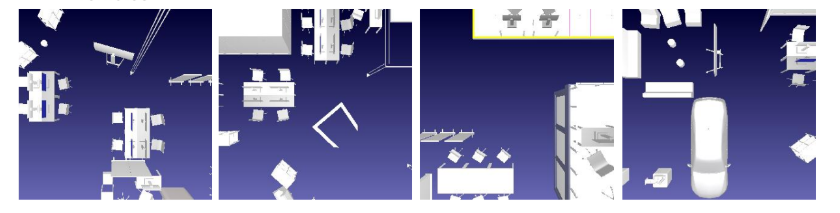

The project begins with the creation of a high-fidelity digital twin of the ARENA2036 shopfloor within RoboDK. This environment serves as the foundation for:

- Aerial surveillance simulation

- Dataset generation for AI training

- Validation of autonomous navigation strategies

As highlighted in the project progress, RoboDK was used to simulate aerial monitoring of the shopfloor, enabling controlled experimentation without physical risk.

Figure 2: ARENA2036 shopfloor and Simulation Framework for Drones

AI-Powered Perception: From Simulation Data to Real-Time Detection

A key innovation of the project is the closed-loop pipeline between simulation and AI training.

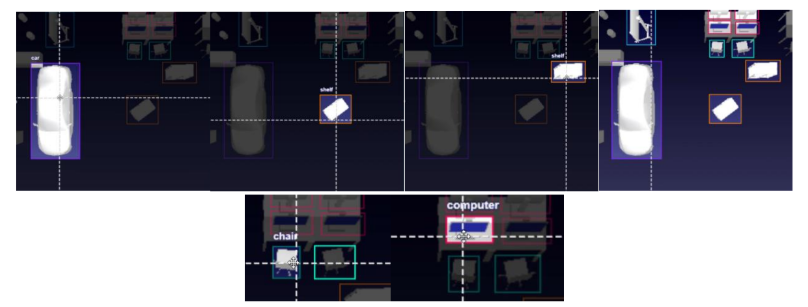

Dataset Generation in Simulation

- A simulated 2D camera mounted on the drone captured aerial images across the shopfloor

- Around 150 images were collected by varying drone positions while maintaining a consistent height

- The dataset included diverse objects such as chairs, shelves, computers, and vehicles
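The capture pattern described above (varying the drone position while holding the height constant) can be sketched as a serpentine grid of camera waypoints. This is a minimal illustration, not the project's actual script: the function name `capture_waypoints`, the floor dimensions, the step size, and the height are all assumptions made for the example.

```python
from typing import List, Tuple

Waypoint = Tuple[float, float, float]  # (x, y, z) in metres


def capture_waypoints(x_min: float, x_max: float,
                      y_min: float, y_max: float,
                      step: float, height: float) -> List[Waypoint]:
    """Return camera positions on a serpentine grid at one fixed height."""
    if step <= 0:
        raise ValueError("step must be positive")
    nx = int((x_max - x_min) // step) + 1  # grid columns
    ny = int((y_max - y_min) // step) + 1  # grid rows
    waypoints: List[Waypoint] = []
    for row in range(ny):
        y = y_min + row * step
        # Reverse every second row so the drone never flies back empty.
        cols = range(nx) if row % 2 == 0 else range(nx - 1, -1, -1)
        for col in cols:
            waypoints.append((x_min + col * step, y, height))
    return waypoints


if __name__ == "__main__":
    # Hypothetical 22 m x 12 m floor, one image every 2 m, camera at 5 m.
    grid = capture_waypoints(0.0, 22.0, 0.0, 12.0, step=2.0, height=5.0)
    print(len(grid), "capture positions, first three:", grid[:3])
```

Each waypoint would then be handed to the simulator to place the drone and trigger the simulated camera; that step depends on the simulation API and is left out here.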

Figure 3: Custom Dataset for Object Detection Model

Annotation and Training

- Images were annotated using Roboflow with multiple object classes

- Dataset expanded through augmentation (144 → 341 images)

- A YOLO-based model was trained for object detection
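The source does not state which augmentations were applied in Roboflow. As one illustrative possibility, the sketch below shows how a horizontal flip would transform an image together with its YOLO-format labels (`class x_center y_center width height`, normalised to the 0..1 range). The helper names are hypothetical, and the image is represented as a plain nested list to keep the example dependency-free.

```python
from typing import List, Tuple

# class_id, x_center, y_center, width, height (all normalised to 0..1)
Box = Tuple[int, float, float, float, float]


def parse_yolo_line(line: str) -> Box:
    """Parse one line of a YOLO label file."""
    class_id, x, y, w, h = line.split()
    return (int(class_id), float(x), float(y), float(w), float(h))


def hflip_image(pixels: List[List[int]]) -> List[List[int]]:
    """Mirror an image (list of pixel rows) left to right."""
    return [list(reversed(row)) for row in pixels]


def hflip_boxes(boxes: List[Box]) -> List[Box]:
    """Mirror bounding boxes: only the x centre changes, sizes are kept."""
    return [(c, 1.0 - x, y, w, h) for (c, x, y, w, h) in boxes]


if __name__ == "__main__":
    image = [[1, 2, 3],
             [4, 5, 6]]
    labels = [parse_yolo_line("0 0.25 0.5 0.1 0.2")]
    print(hflip_image(image))
    print(hflip_boxes(labels))
```

The key point is that every geometric augmentation must be applied to the labels as well as the pixels; otherwise the boxes no longer match the objects.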

Figure 4: Annotations and Classes In Roboflow

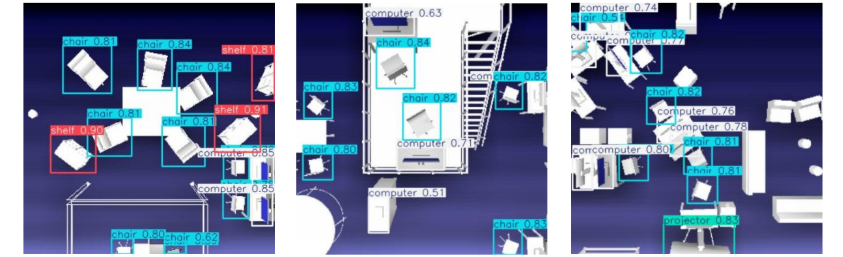

Enhanced Realism

To reflect real-world conditions:

- Human models were added to the environment

- The dataset was extended to include a 7th class (humans)

This enabled:

- Safety-aware perception

- Realistic multi-agent environments

- Foundation for hybrid drone–robot systems

Figure 5: Detections on Unseen Test Dataset Images

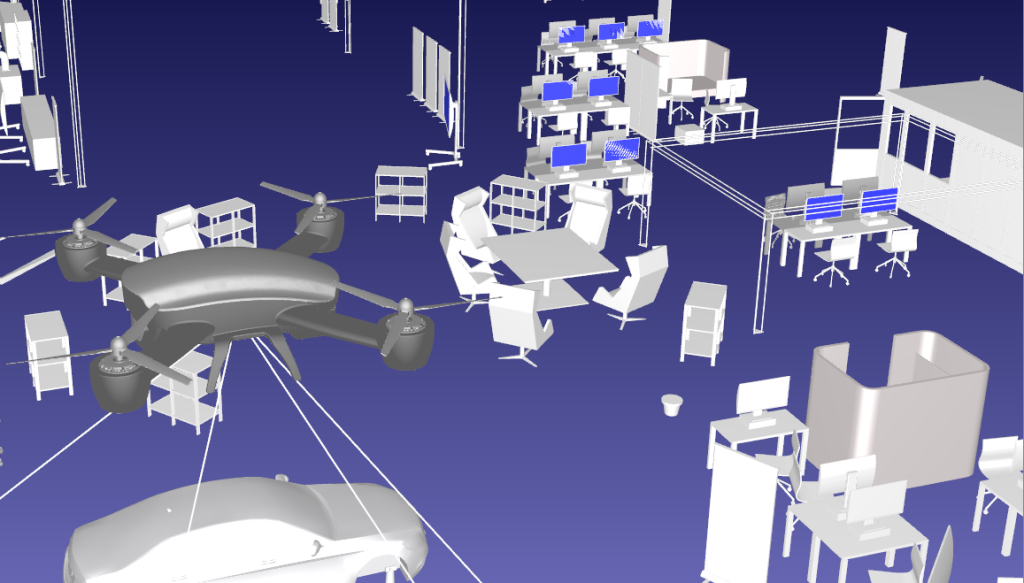

Autonomous Navigation Across Simulation Environments

To ensure both flexibility and realism, the system integrates two complementary simulation environments:

RoboDK: High-Level Task Simulation

- Defines drone trajectories and surveillance paths

- Enables rapid prototyping of mission logic

- Supports integration with perception and AI modules

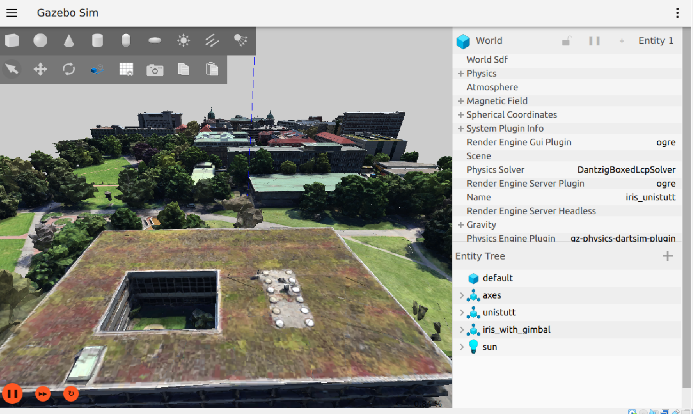

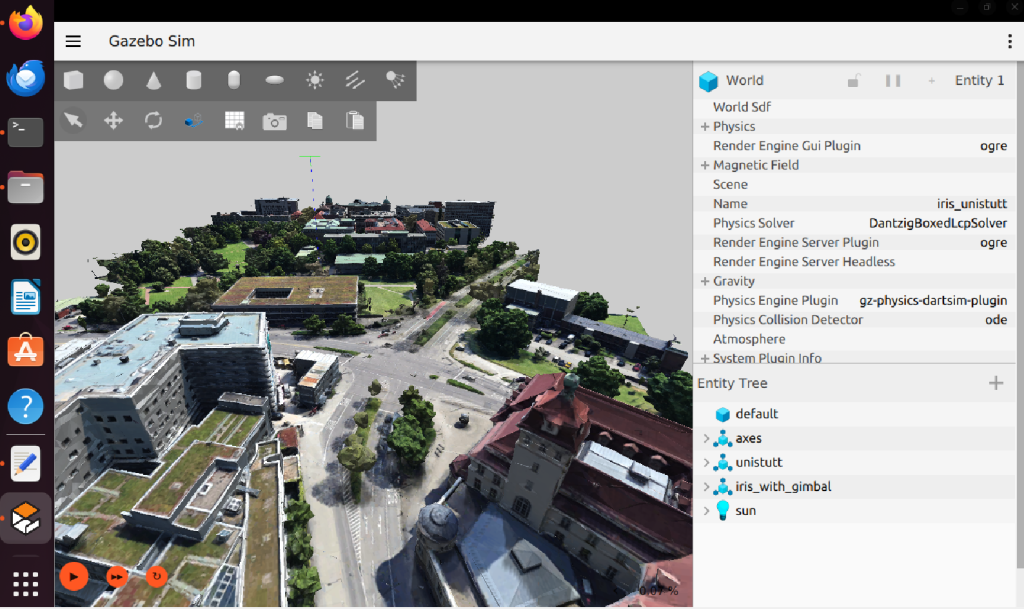

Gazebo + ROS2 + ArduPilot: Physics-Based Validation

- Enables realistic flight dynamics and control

- Supports Software-In-The-Loop (SITL) testing

- Provides map-based navigation and telemetry feedback

This hybrid approach ensures:

- Fast iteration in RoboDK

- Physics-based validation in Gazebo

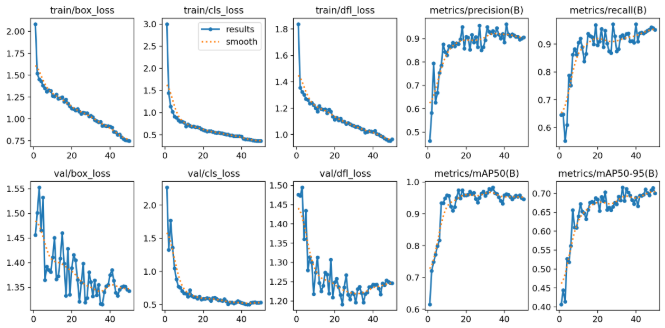

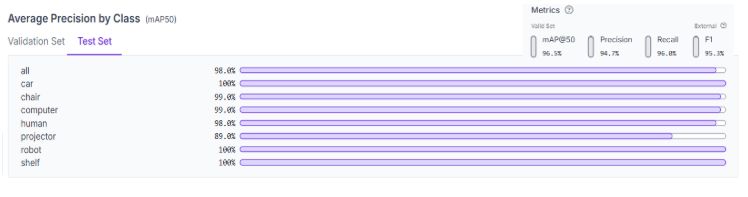

Figure 6: Model Evaluation Metrics Results

Both the confusion matrix and the metric curves indicate strong model performance. After training, the model was deployed on the simulated drone in RoboDK for the first shopfloor surveillance simulation.
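To make the link between a confusion matrix and the reported metrics concrete, the sketch below derives per-class precision and recall from a small matrix. The counts are invented for illustration and are not the project's results; the convention `matrix[true][predicted]` is also an assumption, and the background class that object-detection confusion matrices usually include is omitted for brevity.

```python
from typing import Dict, List, Tuple


def per_class_metrics(matrix: List[List[int]],
                      class_names: List[str]) -> Dict[str, Tuple[float, float]]:
    """Return {class: (precision, recall)} for matrix[true][predicted]."""
    n = len(class_names)
    metrics: Dict[str, Tuple[float, float]] = {}
    for i, name in enumerate(class_names):
        tp = matrix[i][i]
        fn = sum(matrix[i]) - tp                        # true class i, predicted as other
        fp = sum(matrix[r][i] for r in range(n)) - tp   # other class, predicted as i
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        metrics[name] = (precision, recall)
    return metrics


if __name__ == "__main__":
    # Illustrative counts only: rows are true classes, columns are predictions.
    toy_matrix = [[8, 2],
                  [1, 9]]
    for name, (p, r) in per_class_metrics(toy_matrix, ["chair", "shelf"]).items():
        print(f"{name}: precision={p:.2f} recall={r:.2f}")
```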

Precision Autonomy: Landing, Detection, and Control

One of the core technical contributions is precision landing using visual markers.

- The drone uses its onboard camera as the primary perception sensor

- ArUco markers are detected using OpenCV

- A pose estimation pipeline computes position via the PnP algorithm

- The drone autonomously switches to landing mode upon detection
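For reference, the pose estimation step can be written in the standard Perspective-n-Point (PnP) form. The notation below is the conventional textbook one and is not taken from the project: $P_i$ are the four marker corners in the marker frame (known from the printed marker size), $p_i = (u_i, v_i)$ are their detected pixel positions, $K$ is the calibrated camera matrix, and $\pi$ denotes perspective division.

```latex
% Pinhole projection of marker corner P_i = (X_i, Y_i, Z_i) into the image
s_i \begin{bmatrix} u_i \\ v_i \\ 1 \end{bmatrix}
  = K \, [\, R \mid t \,]
    \begin{bmatrix} X_i \\ Y_i \\ Z_i \\ 1 \end{bmatrix},
\qquad
K = \begin{bmatrix} f_x & 0 & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}

% PnP: find the rotation R and translation t that minimise reprojection error
(R^{*}, t^{*}) = \arg\min_{R,\, t} \sum_{i=1}^{4}
  \left\lVert \, p_i - \pi\!\left( K \left( R P_i + t \right) \right) \right\rVert^{2}
```

The recovered translation $t^{*}$ gives the marker's position relative to the camera, which is the quantity a landing controller would drive toward zero in the horizontal plane while descending.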

This demonstrates how vision-based control can enable fully autonomous flight operations.

Figure 7: Updated Model Training

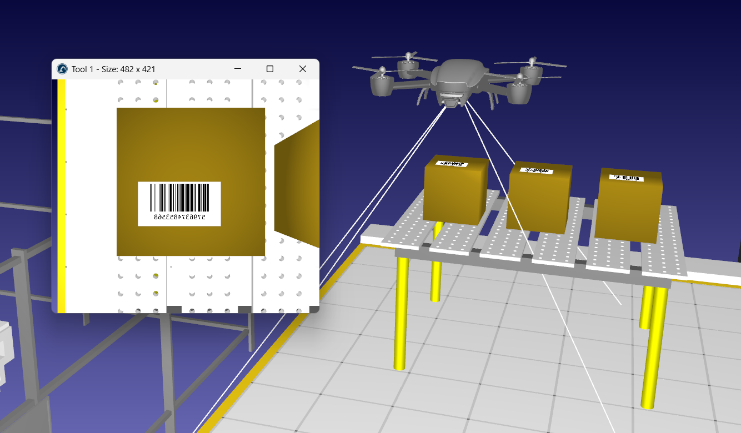

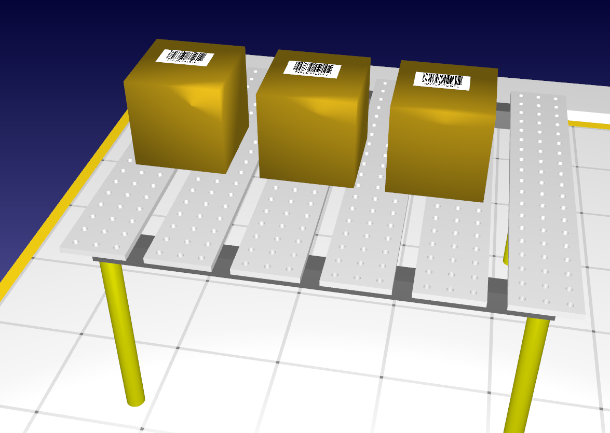

Expanding Capabilities: Inventory and Object Handling

Beyond surveillance, the system extends to logistics automation:

Barcode-Based Object Identification

- Warehouse objects are labeled with unique barcodes

- The drone identifies and tracks items via camera input

- Target-based retrieval enables automated logistics workflows
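The source does not specify which barcode symbology is used. Assuming EAN-13 purely for illustration, unique codes with a valid check digit could be generated as sketched below; the prefix `"200"` and the function names are hypothetical.

```python
from typing import List


def ean13_check_digit(payload: str) -> int:
    """Compute the EAN-13 check digit for a 12-digit payload."""
    if len(payload) != 12 or not payload.isdigit():
        raise ValueError("payload must be exactly 12 digits")
    # Weights alternate 1, 3, 1, 3, ... starting from the leftmost digit.
    total = sum(int(d) * (3 if i % 2 else 1) for i, d in enumerate(payload))
    return (10 - total % 10) % 10


def make_barcodes(count: int, prefix: str = "200") -> List[str]:
    """Generate `count` unique 13-digit codes from sequential serial numbers."""
    width = 12 - len(prefix)
    codes = []
    for serial in range(1, count + 1):
        payload = f"{prefix}{serial:0{width}d}"
        codes.append(payload + str(ean13_check_digit(payload)))
    return codes


def is_valid_ean13(code: str) -> bool:
    """Check length, digits, and check digit of a scanned code."""
    return (len(code) == 13 and code.isdigit()
            and ean13_check_digit(code[:12]) == int(code[12]))


if __name__ == "__main__":
    for code in make_barcodes(3):
        print(code, is_valid_ean13(code))
```

The check digit lets the drone's scanning step reject misread codes before they reach the inventory logic; rendering the digits as a printable barcode image is a separate step not shown here.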

Figure 8: Warehouse boxes labelled with programmatically generated unique barcodes

Object Transportation

- A palletizing gripper enables pick-and-place operations

- The drone can transport objects to designated delivery zones

These capabilities position the system as a multi-functional aerial robotic platform.

Toward Urban-Scale Autonomous Logistics

The project also explores urban deployment scenarios:

- Real-world locations are converted into 3D models using Blender and mapping tools

- These environments are imported into simulation platforms for testing

- Autonomous takeoff, landing, and navigation are evaluated in realistic city-scale environments

This enables:

- Last-mile delivery use cases

- Traffic monitoring

- Integration with public infrastructure

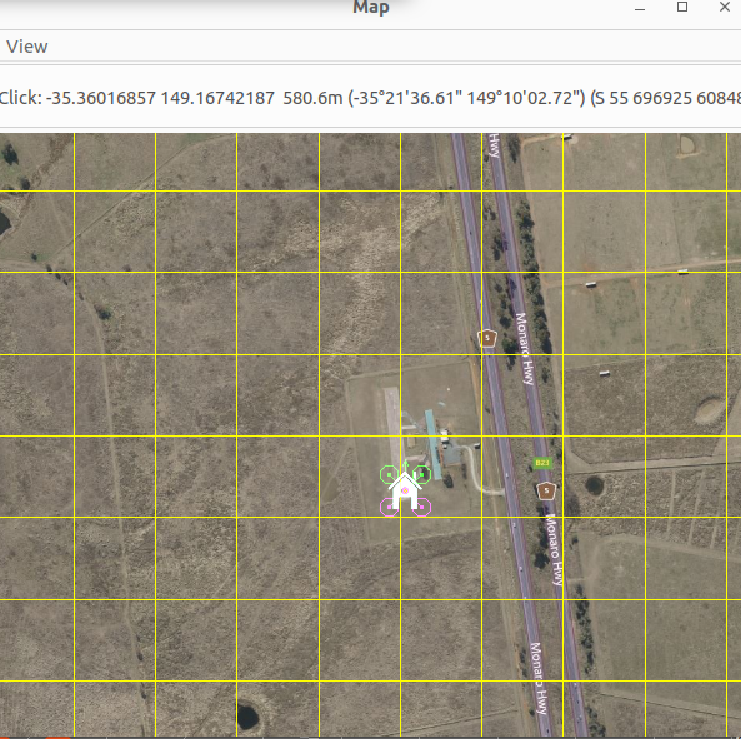

Figure 9: Virtual Simulation Designed for Implementation in Urban Systems

3D Model Generation for Real Locations

- The method uses Blender, RenderDoc, and the MapsModelsImporter add-on to convert locations from Google Maps into 3D models that keep geographical and structural details largely intact.

- RenderDoc is injected into the process rendering Google Maps to capture frames as RenderDoc capture (.rdc) files, which the add-on then imports into Blender for visualization.
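Once the model has been exported from Blender as a mesh, it has to be wrapped in a world description before Gazebo can load it. The sketch below builds a minimal SDF world containing one static model that references the mesh. The world name, model name, and mesh path are hypothetical, and a real world file would normally also define lighting, a ground plane, and physics settings.

```python
import xml.etree.ElementTree as ET


def build_world_sdf(world_name: str, model_name: str, mesh_uri: str) -> str:
    """Return a minimal SDF world with one static mesh model."""
    sdf = ET.Element("sdf", version="1.6")
    world = ET.SubElement(sdf, "world", name=world_name)
    model = ET.SubElement(world, "model", name=model_name)
    # Static models are not moved by the physics engine (suitable for buildings).
    ET.SubElement(model, "static").text = "true"
    link = ET.SubElement(model, "link", name="link")
    # Use the same mesh for what the drone can hit and what the camera sees.
    for tag in ("collision", "visual"):
        element = ET.SubElement(link, tag, name=tag)
        geometry = ET.SubElement(element, "geometry")
        mesh = ET.SubElement(geometry, "mesh")
        ET.SubElement(mesh, "uri").text = mesh_uri
    return ET.tostring(sdf, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical file name for a mesh exported from Blender.
    print(build_world_sdf("urban_world", "city_block",
                          "file://meshes/city_block.dae"))
```

Reusing the full-resolution visual mesh for collisions is the simplest choice, though a simplified collision mesh is often preferable for simulation speed.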

Figure 10: Model Generation

Figure 11: Importing Location as Gazebo World SDF

Challenges and Ongoing Work

The research highlights several open challenges:

- Ensuring accurate physics and collision modeling in simulation

- Improving dataset balance for consistent AI performance

- Seamless integration between RoboDK and Gazebo environments

- Enhancing realism of drone–environment interaction

Future Vision

The roadmap includes:

- Advanced path planning and obstacle avoidance

- Multi-agent coordination (drone + ground robots)

- Real-time adaptive autonomy

- Deployment in smart cities and industrial ecosystems

Conclusion: A Scalable Framework for Autonomous Aerial Systems

This work demonstrates how RoboDK enables a simulation-first approach to developing intelligent robotic systems, bridging the gap between AI models and real-world deployment.

By integrating:

- AI-driven perception

- Autonomous navigation

- Digital twin simulation

- Real-world validation

ARENA2036 is paving the way for scalable, safe, and intelligent aerial robotics systems that can transform industrial operations and beyond.